When folks ask us what a largish SCORM Engine environment looks like, we often tell them about SCORM Cloud. Cloud serves between 27K and 32K createRegistration calls per day. During the busier hours, we serve between 70 and 90 course launches per minute (about 5100 per hour). That's a lot of registrations and launches, and the data Cloud generates is instructive to anyone running SCORM Engine at scale.

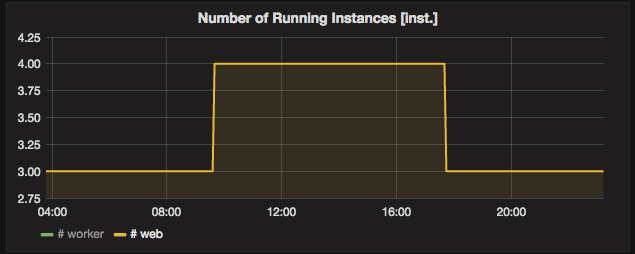

We run SCORM Cloud on top of Amazon Web Services’ infrastructure. SCORM Cloud’s web servers sit behind an Elastic Load Balancer and auto-scale based on usage. We currently run a minimum of three m3.xlarge instances, and we allow the group to scale as high as 9 instances. On a typical busy day, we scale up to 4 (rarely, 5) instances. On an extremely busy day, our most aggressive rules kick in and scale out to 9 instances, even though 9 is not usually strictly necessary. AWS makes it cheap to scale out fast, since resources are billed by the hour. For an overview of what our AWS infrastructure looks like under the hood, please refer to this article.
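To make the 3-to-9 instance bounds concrete, here is a minimal sketch of a CPU-based, target-tracking style sizing rule. SCORM Cloud's actual scaling rules aren't described here, so the function name `desired_capacity` and any target CPU percentage you pass in are hypothetical; only the minimum and maximum group sizes come from the text.

```python
import math

# Group bounds from the text: a three-instance floor and a nine-instance ceiling.
MIN_INSTANCES = 3
MAX_INSTANCES = 9


def desired_capacity(current: int, avg_cpu_pct: float, target_cpu_pct: float) -> int:
    """Hypothetical target-tracking rule: pick the instance count that would
    bring average CPU back to target_cpu_pct, clamped to the group bounds."""
    if current <= 0 or target_cpu_pct <= 0:
        raise ValueError("current and target_cpu_pct must be positive")
    # Total CPU demand (instances x avg CPU), spread across instances at the target level.
    needed = math.ceil(current * avg_cpu_pct / target_cpu_pct)
    return max(MIN_INSTANCES, min(MAX_INSTANCES, needed))


# Three instances averaging 60% CPU against a hypothetical 45% target -> scale to 4.
print(desired_capacity(3, 60, 45))
```

The clamp is what keeps the floor in place during quiet hours (a low CPU reading never drops the group below three) and caps the cost of an aggressive rule firing on a very busy day.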

We have a three-server minimum for availability. We selected the m3.xlarge instance size so that if two servers fail, the one remaining server could (albeit with some strain) pick up the full load.
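A quick way to sanity-check that kind of headroom is to redistribute the failed servers' load across the survivors. This is a minimal sketch that assumes load spreads evenly and scales linearly with traffic; the function name and the 30% input in the example are hypothetical.

```python
def survivor_cpu_pct(servers: int, per_server_cpu_pct: float, failed: int) -> float:
    """CPU each surviving server would need if the failed servers' load
    were spread evenly across the survivors (assumes linear scaling)."""
    survivors = servers - failed
    if survivors <= 0:
        raise ValueError("at least one server must survive")
    return servers * per_server_cpu_pct / survivors


# Three servers at a hypothetical 30% CPU each: losing two leaves one server near 90%.
print(survivor_cpu_pct(3, 30, 2))
```

If the result lands near or above 100%, the surviving server would be overloaded, which is why the instance size matters even when the pool is rarely at its minimum.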

SCORM Cloud is a monolithic application that mostly runs on the same set of servers. Approximately 80% of (non-idle) server time is spent processing SCORM Engine requests.

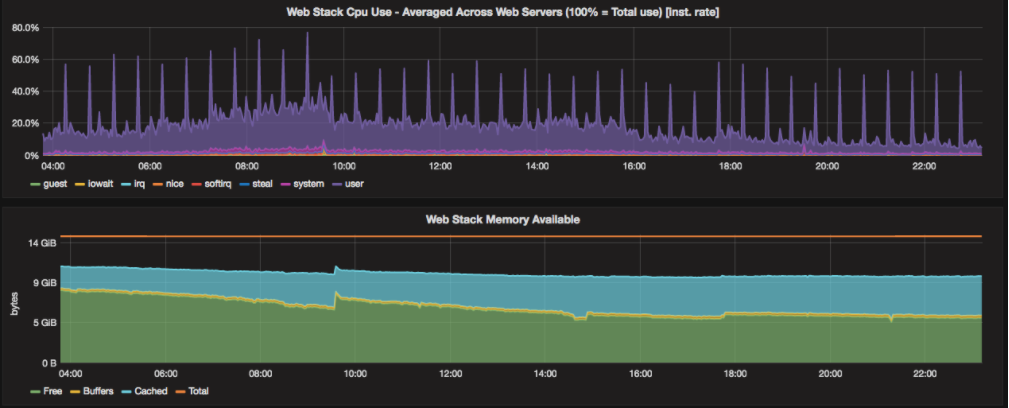

The following sections focus specifically on SCORM Cloud’s web servers, since those are the most relevant to sizing servers for Engine. If you plan to enable SCORM to xAPI conversion, expect a performance penalty (in our experience, roughly +5% to +10% CPU use) that is not reflected in the figures below.

Web Application Server Usage and Load:

The following graphs show typical server usage on a moderately busy day (times are shown in CDT). On this day, we scaled out to four m3.xlarge instances, which serve web traffic via Apache and Tomcat (Apache acts as a proxy for Tomcat via mod_jk).

The scale-out here was triggered by CPU use.

The high CPU spikes come from SCORM Cloud application code, not SCORM Engine. The “regular” CPU use here is about 30%. On AWS, m3.xlarge instances have 15 GiB of system memory, most of which goes unused (we chose the instance size mainly for its CPU allocation, not its memory).

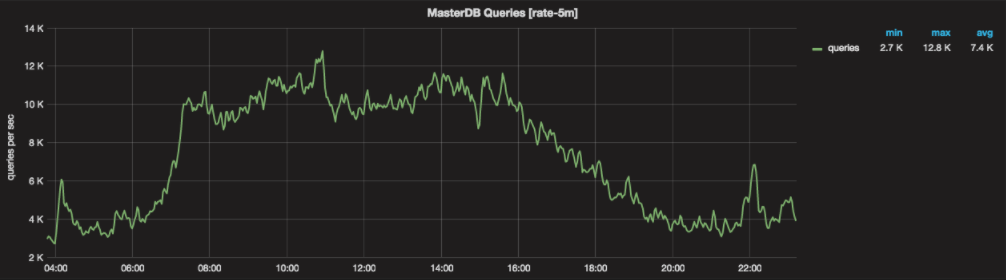

Database Use:

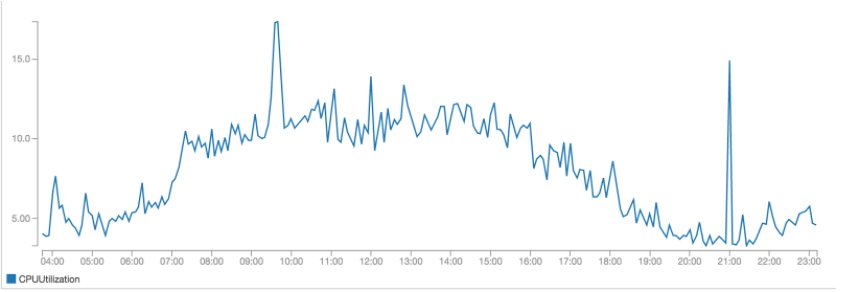

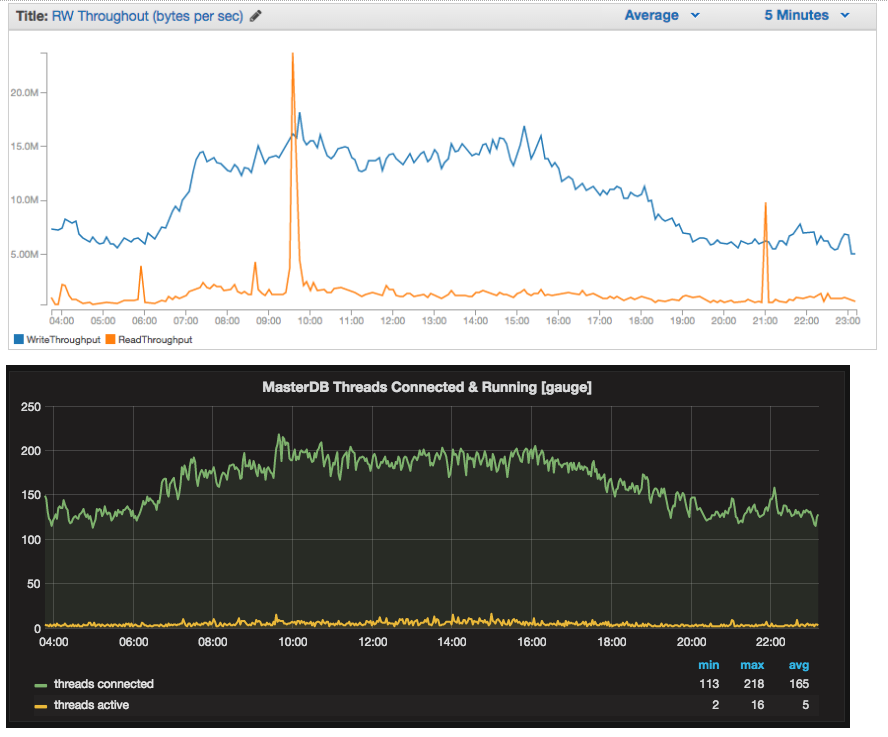

The charts above are typical of the load on our master database server, which currently runs on a db.r3.4xlarge (multi-AZ) Aurora instance. We purposely overbuild our database servers so that they never operate under stress, even at peak load.

For availability, AWS maintains replicas in two separate availability zones and will perform automated failover.

The graph shows an average of 7.4K queries per second (the “rate-5m” in the title indicates that the rate is calculated over 5-minute windows, but it is still expressed per second).
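The "rate-5m" label resembles a Prometheus-style `rate(...[5m])` expression, but the monitoring stack isn't named, so treat that as an assumption. The idea is the same either way: take the increase of a cumulative query counter over a 5-minute window and divide by the window length in seconds. This simplified sketch (hypothetical function name) ignores counter resets and window-edge extrapolation, both of which Prometheus's own `rate()` handles.

```python
WINDOW_SECONDS = 5 * 60  # the "5m" in "rate-5m"


def per_second_rate(counter_start: int, counter_end: int, window_s: int = WINDOW_SECONDS) -> float:
    """Average per-second rate from two samples of a monotonically increasing
    counter taken window_s seconds apart. Counter resets are not handled."""
    if counter_end < counter_start:
        raise ValueError("counter reset detected; this sketch does not handle resets")
    return (counter_end - counter_start) / window_s


# 2.22M queries counted over a 5-minute window is 7,400 queries per second.
print(per_second_rate(0, 2_220_000))
```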

The SCORM Cloud master DB usually peaks at about 18% CPU, and regular traffic keeps it at about 12.5% on average.

IOPS are quite low on the master DB because it can keep the most active tables in memory.

Each of our app servers has a database connection pool (HikariCP) configured with a maximum of 64 connections.
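Because every web server carries its own pool, the worst-case connection count at the database grows with the size of the auto-scaling group. This minimal sketch multiplies the per-server maximum (HikariCP's `maximumPoolSize`, 64 per the text) by the 3-to-9 instance range; the function name is hypothetical. The database's connection limit needs to cover the largest of these figures.

```python
MAX_POOL_SIZE_PER_SERVER = 64  # per-server HikariCP maximum, from the text


def fleet_max_connections(servers: int, pool_size: int = MAX_POOL_SIZE_PER_SERVER) -> int:
    """Worst case: every app server's pool is fully open at the same time."""
    return servers * pool_size


for n in (3, 4, 9):
    print(f"{n} servers -> up to {fleet_max_connections(n)} DB connections")
# 3 servers -> up to 192 DB connections
# 4 servers -> up to 256 DB connections
# 9 servers -> up to 576 DB connections
```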

Request Volume:

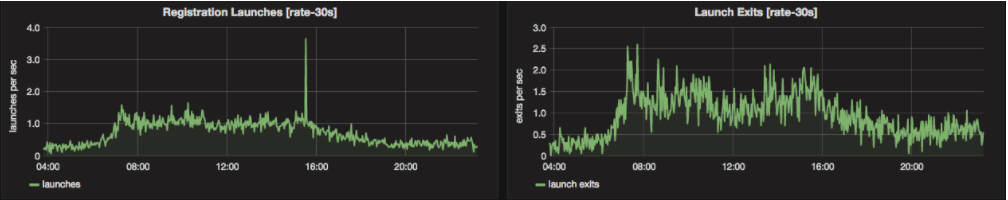

On a typical day, SCORM Cloud serves between 27K and 32K createRegistration calls. During the busier hours, we serve between 70 and 90 course launches per minute (about 5100 per hour). In the off-hours, we drop to about 18 launches per minute (about 1000 per hour).
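Converting these figures to common units makes them easier to compare against your own traffic. The sketch below (hypothetical helper names) shows that 70 to 90 launches per minute spans 4,200 to 5,400 per hour, so "about 5100" corresponds to roughly 85 per minute, and 18 per minute is about 1,080 per hour. Averaging daily registrations over 24 hours understates busy-hour rates, since traffic is not spread evenly through the day.

```python
MINUTES_PER_DAY = 24 * 60


def launches_per_hour(per_minute: float) -> float:
    """Scale a per-minute launch rate to an hourly figure."""
    return per_minute * 60


def daily_to_per_minute(per_day: float) -> float:
    """Flat 24-hour average; real traffic is concentrated in working hours."""
    return per_day / MINUTES_PER_DAY


print(launches_per_hour(70), launches_per_hour(90))  # busy-hour range
print(launches_per_hour(18))                          # off-hours
print(daily_to_per_minute(27_000), daily_to_per_minute(32_000))
```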

Course launches are the most bandwidth-intensive activity SCORM Cloud handles. Outbound bandwidth over 24 hours is typically in the 1 TB to 1.5 TB range.
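For network planning, it can help to turn a daily transfer volume into an average line rate. This sketch assumes decimal units (1 TB = 10^12 bytes); if the figures are binary TiB, the results come out about 10% higher. The function name is hypothetical. It yields roughly 93 Mbps for 1 TB/day and 139 Mbps for 1.5 TB/day, and these are 24-hour averages, so busy-hour throughput will be higher.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def avg_mbps(bytes_per_day: float) -> float:
    """Average outbound rate in megabits per second (decimal units)."""
    return bytes_per_day * 8 / SECONDS_PER_DAY / 1e6


for tb in (1.0, 1.5):
    print(f"{tb} TB/day ~ {avg_mbps(tb * 1e12):.1f} Mbps average")
```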

HTTP Request Patterns:

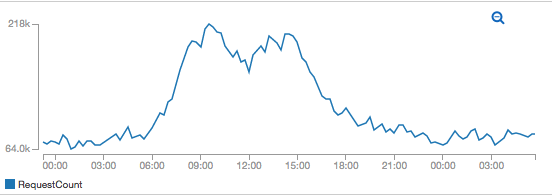

Cloud currently serves an average of 220 HTTP requests per second (13.2K per minute) and spikes as high as 275 requests per second (16.5K per minute). Approximately 60% of these requests (~8K per minute) never touch the application server (Tomcat) at all; they are requests for either course content or static content. The other 40% (~5.2K per minute) are handled by Tomcat.
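The 60/40 split is what actually sizes the Tomcat tier. This minimal sketch (hypothetical function names) applies the 40% share to a total request rate and divides the Tomcat portion across web servers, assuming the load balancer spreads requests evenly. At 220 requests per second, that is 88 Tomcat requests per second, or about 22 per instance across four instances.

```python
def split_requests(total_rps: float, tomcat_share: float = 0.40):
    """Return (static_or_content_rps, tomcat_rps) for a total request rate."""
    tomcat = total_rps * tomcat_share
    return total_rps - tomcat, tomcat


def tomcat_rps_per_instance(total_rps: float, instances: int, tomcat_share: float = 0.40) -> float:
    """Tomcat load per web server, assuming an even spread across instances."""
    _, tomcat = split_requests(total_rps, tomcat_share)
    return tomcat / instances


print(split_requests(220))              # average
print(split_requests(275))              # spike
print(tomcat_rps_per_instance(220, 4))  # four instances, as on a moderately busy day
```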

We experience a sharp spike of traffic at the beginning of the work day, a lull during typical lunch hours, and another sharp spike after lunch. For many e-learning applications, this general pattern will hold.

We hope this data proves useful to you as you plan your deployment. Please don’t hesitate to contact us at support@rusticisoftware.com with any questions you may have.